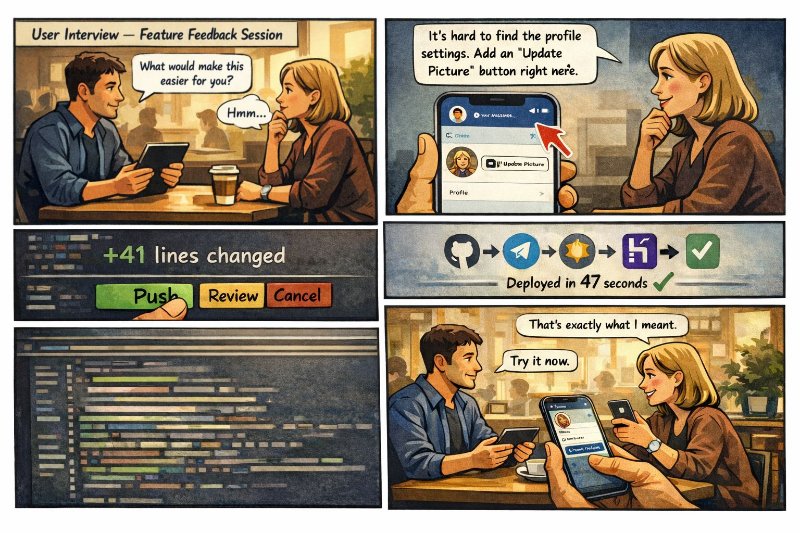

Most product teams still treat feedback and shipping as two different worlds.

A user asks for something. The PM writes it down. It goes into the backlog. Then planning, sprint, release.

I wanted to compress that loop.

So I built a Telegram control bot that lets me send a text or voice note, turn it into structured code edits, and push changes to a live app. For me, this is where AI app development starts to feel genuinely new.

The product loop just got shorter

Imagine talking to a user in a coffee shop. They describe a rough edge in your app. You record it in Telegram.

The bot transcribes the voice message, asks Claude to prepare code changes, shows a diff preview, and waits for approval. One tap later, GitHub gets a commit and Heroku deploys it.

That is not a prototype fantasy. That is a real product workflow.

1. How the workflow works

- Telegram receives a text or voice instruction from the product owner.

- Whisper, routed through OpenRouter, turns voice into clean text.

- Claude reads the instruction together with the relevant repo context and returns structured file edits.

- A control service applies those edits in a cloned copy of the repository, not in the live app.

- After approval, the bot commits, pushes to GitHub, and Heroku auto-deploys the new version.
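The middle two steps can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the bot's actual code. It assumes the model returns full-file replacements in a hypothetical `{"path": ..., "content": ...}` format, and it uses `shutil.copytree` as a stand-in for `git clone` so the example runs offline. The unified diff it returns is the kind of preview the bot would show before asking for approval.

```python
import difflib
import shutil
import tempfile
from pathlib import Path


def clone_workspace(repo_dir: Path) -> Path:
    """Copy the repo into a fresh temp dir so edits never touch the live checkout.

    Stand-in for `git clone`; a real setup would clone from the remote instead.
    """
    work_dir = Path(tempfile.mkdtemp(prefix="bot-edit-")) / "repo"
    shutil.copytree(repo_dir, work_dir)
    return work_dir


def apply_edits(work_dir: Path, edits: list) -> str:
    """Apply full-file replacements (hypothetical {"path", "content"} format)
    to the clone and return a unified diff for the approval preview.

    Path validation is deliberately left out of this sketch.
    """
    diff_chunks = []
    for edit in edits:
        target = work_dir / edit["path"]
        old = target.read_text().splitlines(keepends=True) if target.exists() else []
        new = edit["content"].splitlines(keepends=True)
        diff_chunks.extend(
            difflib.unified_diff(
                old, new, fromfile=f"a/{edit['path']}", tofile=f"b/{edit['path']}"
            )
        )
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(edit["content"])
    return "".join(diff_chunks)


if __name__ == "__main__":
    # Demo: a throwaway "live" app with one config file.
    live = Path(tempfile.mkdtemp()) / "app"
    live.mkdir()
    (live / "config.py").write_text("FREE_QUESTIONS = 0\n")
    clone = clone_workspace(live)
    print(apply_edits(clone, [{"path": "config.py", "content": "FREE_QUESTIONS = 1\n"}]))
```

The live directory is only ever read, never written, which is the whole point of editing a clone. The temp directories are not cleaned up here; a real service would remove them once the change is pushed or rejected.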

2. A real product moment

This gets interesting when the feedback is specific enough to be actionable.

A user might say: "The whitelist is too restrictive. Let anyone ask one free question, then show a button to request full access."

Instead of translating that into tickets later, the system can turn it into a controlled change while the conversation is still fresh. That is not just convenience. It is product memory.
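As an illustration, the whitelist feedback above could end up as a rule like the one below. The class name, the `"request_access"` result, and the in-memory counter are all hypothetical. A real bot would persist usage somewhere durable and map `"request_access"` to whatever button its Telegram library provides.

```python
from dataclasses import dataclass, field

FREE_QUESTIONS = 1  # hypothetical limit taken from the user's request


@dataclass
class AccessPolicy:
    whitelist: set = field(default_factory=set)   # user IDs with full access
    used: dict = field(default_factory=dict)      # user_id -> free questions asked
    free_limit: int = FREE_QUESTIONS

    def check(self, user_id: int) -> str:
        """Return "answer" if the user may ask now, else "request_access"."""
        if user_id in self.whitelist:
            return "answer"
        asked = self.used.get(user_id, 0)
        if asked < self.free_limit:
            # Count the free question before answering it.
            self.used[user_id] = asked + 1
            return "answer"
        return "request_access"
```

This is exactly the size of change the loop handles well: one constant and one branch, easy to read in a diff and easy for the user to verify minutes later.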

What the bot is actually good at changing

- UI tweaks like hiding advanced inputs, renaming buttons, or changing defaults.

- Business rules like free usage limits, whitelists, thresholds, and simple validation logic.

- Telegram bots with AI flows, including messages, buttons, and lightweight access rules.

- Small internal tools where fast AI workflow automation matters more than formal process.

3. Why this matters for PMs, founders, and tiny teams

The big shift is not that AI writes code. The big shift is that the gap between user feedback and production is collapsing.

For PMs, it means less waiting between learning and testing. For founders, it means fewer handoffs. For small teams and AI consulting projects, it means faster loops back to what users actually said.

When the user can verify a change minutes later, you reduce interpretation loss. That is huge.

Safety rules I would never skip

- Always edit a cloned repo, never the currently running code.

- Ask the model for structured JSON edits, not vague prose.

- Show a summary and diff preview before every push.

- Keep deployment inside the normal GitHub-to-Heroku flow.

- Limit access to one admin chat or a very small trusted group.
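Two of these rules are easy to enforce in code. The sketch below assumes a hypothetical edit format (a JSON list of `{"path", "content"}` objects) and a hard-coded admin chat ID; both are placeholders, not values from a real setup. Anything the model returns that does not parse cleanly is rejected before it ever reaches a diff preview.

```python
import json

ADMIN_CHAT_IDS = {123456789}  # hypothetical: the one admin chat allowed to trigger edits


class EditRejected(ValueError):
    """Raised when the model's output is not a usable edit list."""


def parse_edits(raw: str) -> list:
    """Parse the model's reply as a JSON list of {"path": str, "content": str}."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise EditRejected(f"not valid JSON: {exc}") from exc
    if not isinstance(data, list) or not data:
        raise EditRejected("expected a non-empty JSON list of edits")
    for i, edit in enumerate(data):
        # Exactly two keys: no extra fields the service would silently ignore.
        if not isinstance(edit, dict) or set(edit) != {"path", "content"}:
            raise EditRejected(f"edit {i} must have exactly 'path' and 'content'")
        if not all(isinstance(edit[k], str) for k in ("path", "content")):
            raise EditRejected(f"edit {i} fields must be strings")
    return data


def is_authorized(chat_id: int) -> bool:
    """Only chats on the allowlist may request code changes."""
    return chat_id in ADMIN_CHAT_IDS
```

Failing closed is the design choice here: a malformed reply produces an error message in the chat, never a partial commit.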

4. Where this breaks in real life

This kind of setup is powerful, but it is not magic.

Ambiguous feedback still creates bad changes. Weak prompts still produce weak diffs. And if the repo context is messy, the model will confidently edit the wrong place.

The answer is not to slow everything down. It is to add better guardrails, better prompts, and cleaner repo boundaries. Real AI automation still needs taste.
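One concrete form of "cleaner repo boundaries" is a path allowlist checked before any edit is applied. The prefixes below are hypothetical. The idea is that the model can only touch files in areas you have deliberately marked as safe for fast changes, so a confident edit in the wrong place is refused instead of committed.

```python
from pathlib import PurePosixPath

# Hypothetical boundary: the only parts of the repo the bot may touch.
EDITABLE_PREFIXES = ("bot/messages/", "bot/rules/", "templates/")


def within_boundaries(path: str) -> bool:
    """Accept only relative paths, with no '..', under an allowed prefix."""
    p = PurePosixPath(path)
    if p.is_absolute() or ".." in p.parts:
        return False
    return any(str(p).startswith(prefix) for prefix in EDITABLE_PREFIXES)


def out_of_bounds(edits: list) -> list:
    """Return the paths that fall outside the editable area (empty means OK)."""
    return [e["path"] for e in edits if not within_boundaries(e["path"])]
```

Files like deploy config or secrets simply never match a prefix, so the model cannot change them no matter how the instruction is phrased.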

What you need to build it today

- Whisper or another speech-to-text layer for voice notes.

- Claude or a similar model for structured code editing.

- Git access through a deploy key or GitHub token.

- A deploy target such as Heroku, Railway, or another auto-deploy platform.

- A simple control bot with approval states, logs, and clear rollback paths.
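The "approval states, logs, and clear rollback paths" part can be modeled as a small state machine. This is a sketch under assumptions: the state names are hypothetical, logging uses Python's standard `logging` module, and rollback is expressed as a `git revert` of the deployed commit, which an auto-deploy platform would ship like any other push once it is pushed. The method only builds the command; running it is left to the caller.

```python
import logging
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional

log = logging.getLogger("control-bot")


class State(Enum):
    PENDING = "pending"          # diff shown, waiting for the admin's tap
    APPROVED = "approved"
    REJECTED = "rejected"
    DEPLOYED = "deployed"        # pushed; the platform auto-deploys
    ROLLED_BACK = "rolled_back"


# Allowed transitions; anything else is a bug or a stale button press.
TRANSITIONS = {
    State.PENDING: {State.APPROVED, State.REJECTED},
    State.APPROVED: {State.DEPLOYED},
    State.DEPLOYED: {State.ROLLED_BACK},
    State.REJECTED: set(),
    State.ROLLED_BACK: set(),
}


@dataclass
class ChangeRequest:
    request_id: str
    instruction: str
    state: State = State.PENDING
    commit_sha: Optional[str] = None
    history: List[State] = field(default_factory=lambda: [State.PENDING])

    def move(self, new_state: State) -> None:
        """Transition to new_state, logging it, or raise if not allowed."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.request_id}: cannot go {self.state.value} -> {new_state.value}"
            )
        log.info("%s: %s -> %s", self.request_id, self.state.value, new_state.value)
        self.state = new_state
        self.history.append(new_state)

    def mark_deployed(self, commit_sha: str) -> None:
        # Validate the transition first so a failed move leaves no stale SHA.
        self.move(State.DEPLOYED)
        self.commit_sha = commit_sha

    def rollback_command(self) -> List[str]:
        """Return (not run) a revert command for the deployed commit."""
        if self.state is not State.DEPLOYED or self.commit_sha is None:
            raise ValueError("only a deployed change can be rolled back")
        return ["git", "revert", "--no-edit", self.commit_sha]
```

Refusing illegal transitions matters more than it looks: a stale "Approve" button tapped twice fails loudly instead of pushing the same change again. After the revert is pushed, the caller would record it with `move(State.ROLLED_BACK)`.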

5. The bigger shift

I think we are moving from "AI helps engineers code" to "AI shortens the distance between feedback and shipped product."

That changes how product interviews feel. It changes how fast experiments happen. It even changes how you think about the backlog.

You do not need this loop for every feature. But once you feel it on the right kind of change, it is hard to go back.

Where this gets useful fast

- Internal admin panels and ops tools.

- Bot products with clear message flows and access rules.

- MVPs where the cost of waiting is higher than the cost of reworking.

- Products where feedback is concrete and immediately testable.

Final reflection

I like this idea not because it is flashy, but because it respects momentum.

The best product conversations are warm, specific, and fragile. If you can turn that energy into a live change before it cools down, you learn faster and build with less fiction.

That is the part that feels new to me. Not AI as autocomplete. AI as a bridge between user truth and working software.